Building an AI-powered spam detection bot for Discord

As mentioned in my previous tutorial, Building an AI-powered Discord support bot, I'm in quite a few community Slack and Discord servers, and I've noticed a growing number of people who join just to advertise their services. This clogs up the channels and makes for a bad experience for genuine users. Manual moderation is exhausting, and by the time you catch the spam, the damage is done. Your focus should be on supporting the people who need help.

It bugs me when I see this happening in servers I'm part of. I understand that moderators' time is limited, so there needs to be an automated or semi-automated way to manage the problem.

What if your Discord server had a bot that automatically detected spam using both pattern matching and AI? A bot that learns from your moderation decisions and gets smarter over time? That's exactly what we're building today.

What you'll build

By the end of this tutorial, you'll have a production-ready Discord bot that:

- Monitors all channels for potential spam automatically

- Uses heuristic detection to catch common spam patterns (job postings, self-promotion, contact harvesting)

- Leverages Claude AI for borderline cases and context-aware analysis

- Tracks message history to identify repeat offenders

- Sends alerts to a moderation channel with detailed analysis and one-click actions

- Provides moderation buttons to approve, warn, or kick users

- Gracefully handles errors without crashing your bot

The bot uses a hybrid approach: fast heuristics catch obvious spam, while Claude analyzes nuanced cases. This keeps costs low while maintaining high accuracy.

Prerequisites

Before we begin, you'll need:

- Node.js 18+ installed

- TypeScript familiarity (we'll use strict mode)

- Discord account and a server where you have admin permissions

- Anthropic API key (sign up at console.anthropic.com)

- Basic understanding of Discord bots (we'll cover the setup)

Project setup

Let's start by creating our project structure.

mkdir discord-spam-detector

cd discord-spam-detector

npm init -y

Install the dependencies:

npm install discord.js @anthropic-ai/sdk better-sqlite3 dotenv

npm install -D typescript @types/node @types/better-sqlite3 tsx

What we're using:

- discord.js - A widely used Node.js library for the Discord API (community-maintained, not published by Discord)

- @anthropic-ai/sdk - Claude AI integration

- better-sqlite3 - Fast, synchronous SQLite for message history

- dotenv - Environment variable management

- tsx - TypeScript execution with watch mode for development

Initialize TypeScript:

npx tsc --init

Update your tsconfig.json:

{

"compilerOptions": {

"target": "ES2022",

"module": "Node16",

"moduleResolution": "node16",

"lib": ["ES2022"],

"outDir": "./dist",

"rootDir": "./src",

"strict": true,

"esModuleInterop": true,

"skipLibCheck": true,

"forceConsistentCasingInFileNames": true,

"resolveJsonModule": true,

"declaration": true,

"sourceMap": true

},

"include": ["src/**/*"],

"exclude": ["node_modules", "dist"]

}

Update package.json scripts:

{

"type": "module",

"scripts": {

"dev": "tsx watch src/index.ts",

"build": "tsc",

"start": "node dist/index.js"

}

}

Setting up your Discord bot

Head over to the Discord Developer Portal and create a new application. This will be your bot.

Enable privileged intents

Your bot needs special permissions to read message content. In the "Bot" section:

- Scroll to Privileged Gateway Intents

- Enable Message Content Intent ✅

- Enable Server Members Intent ✅

Without these, your bot won't be able to read messages or access member information.

Generate an invite link

In the "OAuth2 > URL Generator" section, select:

Scopes:

- bot

- applications.commands

Bot Permissions:

- Read Messages/View Channels

- Send Messages

- Manage Messages

- Kick Members

- Read Message History

Copy the generated URL and open it in your browser to add the bot to your server. Choose a test server first because you don't want to test spam detection on your main community!

Grab your credentials

You'll need:

- Bot Token - From the "Bot" section

- Client ID - From the "General Information" section

- Mod Channel ID - Create a private channel for spam alerts, then right-click it and "Copy Channel ID" (enable Developer Mode in Discord settings first)

Configuration management

Create a .env file in your project root:

# Discord Bot Configuration

DISCORD_TOKEN=your_bot_token_here # Bot token from Discord Developer Portal

DISCORD_CLIENT_ID=your_client_id_here # Application ID from Discord Developer Portal

MOD_CHANNEL_ID=your_mod_channel_id_here # Channel ID where spam alerts will be sent

# Anthropic API Key

ANTHROPIC_API_KEY=your_anthropic_api_key_here # Get this from console.anthropic.com

# Detection Thresholds (optional - these are defaults)

HEURISTIC_THRESHOLD=5 # Minimum spam score to flag (higher = less sensitive)

AI_THRESHOLD=0.7 # AI confidence needed to flag as spam (0-1 scale)

SIMILARITY_THRESHOLD=0.7 # How similar messages must be to count as duplicates (0-1 scale)

HISTORY_DAYS=7 # How many days of message history to keep

Now create src/config.ts to load these values:

import 'dotenv/config';

function requireEnv(name: string): string {

const value = process.env[name];

if (!value) {

throw new Error(`Missing required environment variable: ${name}`);

}

return value;

}

function optionalEnv(name: string, defaultValue: string): string {

return process.env[name] ?? defaultValue;

}

export const config = {

discord: {

token: requireEnv('DISCORD_TOKEN'),

clientId: requireEnv('DISCORD_CLIENT_ID'),

},

modChannelId: requireEnv('MOD_CHANNEL_ID'),

anthropic: {

apiKey: requireEnv('ANTHROPIC_API_KEY'),

},

thresholds: {

heuristic: parseInt(optionalEnv('HEURISTIC_THRESHOLD', '5'), 10),

ai: parseFloat(optionalEnv('AI_THRESHOLD', '0.7')),

similarity: parseFloat(optionalEnv('SIMILARITY_THRESHOLD', '0.7')),

},

historyDays: parseInt(optionalEnv('HISTORY_DAYS', '7'), 10),

};

This validates required variables at startup. It's better to fail fast than discover missing config when spam arrives!
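
One gap remains in the optional values: parseInt and parseFloat return NaN for input such as "abc", and every comparison against NaN is false, so a mistyped threshold would quietly disable detection. The sketch below shows one way to fail fast on that case as well. The numberEnv helper is hypothetical (it is not part of the config.ts above) and takes the environment as an argument so it can be tested without touching process.env.

```typescript
// Hypothetical helper (not in the original config.ts): parse a numeric
// environment variable and fail fast when the value is not a finite number.
type Env = Record<string, string | undefined>;

function numberEnv(env: Env, name: string, defaultValue: number): number {
  const raw = env[name];
  // Treat unset or empty values as "use the default".
  if (raw === undefined || raw.trim() === '') return defaultValue;

  const value = Number(raw);
  if (!Number.isFinite(value)) {
    throw new Error(`Environment variable ${name} must be a number, got "${raw}"`);
  }
  return value;
}

// Possible usage inside config.ts:
//   heuristic: numberEnv(process.env, 'HEURISTIC_THRESHOLD', 5)
```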

Database schema

We need to track message history to detect repeat offenders. SQLite is perfect for this because there is no separate database server required.

Create src/database/index.ts:

import Database, { type Database as DatabaseType } from 'better-sqlite3';

import path from 'path';

import { fileURLToPath } from 'url';

const __dirname = path.dirname(fileURLToPath(import.meta.url));

const dbPath = path.join(__dirname, '..', '..', 'moderation.db');

export const db: DatabaseType = new Database(dbPath);

export function initDatabase(): void {

// Enable WAL mode for better concurrent access

db.pragma('journal_mode = WAL');

// Create messages table for history tracking

db.exec(`

CREATE TABLE IF NOT EXISTS messages (

id TEXT PRIMARY KEY,

user_id TEXT NOT NULL,

channel_id TEXT NOT NULL,

guild_id TEXT NOT NULL,

content TEXT NOT NULL,

created_at INTEGER NOT NULL

)

`);

// Create moderation log table

db.exec(`

CREATE TABLE IF NOT EXISTS moderation_log (

id INTEGER PRIMARY KEY AUTOINCREMENT,

message_id TEXT NOT NULL,

user_id TEXT NOT NULL,

action TEXT NOT NULL,

moderator_id TEXT NOT NULL,

created_at INTEGER NOT NULL

)

`);

// Create index for fast user history lookups

db.exec(`

CREATE INDEX IF NOT EXISTS idx_messages_user_time

ON messages(user_id, created_at)

`);

// Create index for guild-based queries

db.exec(`

CREATE INDEX IF NOT EXISTS idx_messages_guild

ON messages(guild_id, created_at)

`);

console.log('Database initialized');

}

export function cleanOldMessages(daysToKeep: number): void {

const cutoff = Date.now() - daysToKeep * 24 * 60 * 60 * 1000;

const stmt = db.prepare('DELETE FROM messages WHERE created_at < ?');

const result = stmt.run(cutoff);

if (result.changes > 0) {

console.log(`Cleaned up ${result.changes} old messages`);

}

}

The indexes are crucial. Without them, looking up a user's message history would scan the entire table. With them, SQLite can jump straight to that user's rows, so lookups stay fast even with many thousands of stored messages.

Now create src/database/models.ts for our data access layer:

import { db } from './index.js';

import { config } from '../config.js';

import type { Statement } from 'better-sqlite3';

export interface StoredMessage {

id: string;

userId: string;

channelId: string;

guildId: string;

content: string;

createdAt: number;

}

export interface ModerationLogEntry {

messageId: string;

userId: string;

action: 'approved' | 'spam' | 'spam_kick';

moderatorId: string;

}

// Prepared statements for better performance

let insertMessageStmt: Statement;

let getUserMessagesStmt: Statement;

let insertModerationLogStmt: Statement;

let deleteMessageStmt: Statement;

export function initModels(): void {

insertMessageStmt = db.prepare(`

INSERT OR REPLACE INTO messages (id, user_id, channel_id, guild_id, content, created_at)

VALUES (@id, @userId, @channelId, @guildId, @content, @createdAt)

`);

getUserMessagesStmt = db.prepare(`

SELECT id, user_id as userId, channel_id as channelId, guild_id as guildId, content, created_at as createdAt

FROM messages

WHERE user_id = ? AND guild_id = ? AND created_at > ?

ORDER BY created_at DESC

`);

insertModerationLogStmt = db.prepare(`

INSERT INTO moderation_log (message_id, user_id, action, moderator_id, created_at)

VALUES (@messageId, @userId, @action, @moderatorId, @createdAt)

`);

deleteMessageStmt = db.prepare(`

DELETE FROM messages WHERE id = ?

`);

}

function ensureInitialized(): void {

if (!insertMessageStmt || !getUserMessagesStmt || !insertModerationLogStmt || !deleteMessageStmt) {

throw new Error('Database models not initialized. Call initModels() first.');

}

}

export function saveMessage(message: StoredMessage): void {

ensureInitialized();

insertMessageStmt.run(message);

}

export function getUserRecentMessages(userId: string, guildId: string): StoredMessage[] {

ensureInitialized();

const cutoff = Date.now() - config.historyDays * 24 * 60 * 60 * 1000;

return getUserMessagesStmt.all(userId, guildId, cutoff) as StoredMessage[];

}

export function logModerationAction(entry: ModerationLogEntry): void {

ensureInitialized();

insertModerationLogStmt.run({

...entry,

createdAt: Date.now(),

});

}

export function deleteStoredMessage(messageId: string): void {

ensureInitialized();

deleteMessageStmt.run(messageId);

}

Prepared statements are a best practice with SQLite: each statement is compiled once and then reused, which avoids re-parsing the SQL on every call and keeps user input out of the query text.

Heuristic spam detection

Before we involve AI (and incur API costs), let's catch obvious spam with pattern matching. This heuristic approach is fast, free, and should catch most of the spam. We use keyword matching, pattern recognition, and message analysis to build a spam score.

Create src/detection/heuristics.ts and start with the keyword definitions:

import type { Message } from 'discord.js';

export interface HeuristicResult {

score: number;

reasons: string[];

}

// Keywords commonly found in job spam / self-promotion

const SPAM_KEYWORDS = [

// Job/work related

'remote work',

'work from home',

'daily pay',

'flexible hours',

'hiring',

'freelancer',

'freelancers needed',

'job opportunity',

'work opportunities',

// Self-promotion phrases

"i'm a developer",

"i'm an engineer",

'my services',

'years of experience',

'i can help you',

"let's talk",

"let's connect",

'dm me',

'reach out',

'contact me',

'book a call',

// Tech buzzwords in promotional context

'ai automation',

'ai agent',

'custom ai',

'llm integration',

'production-ready solutions',

// Common spam patterns

'looking for projects',

'looking for opportunities',

'available for hire',

'open for work',

"if you're looking",

'i specialize in',

'my expertise',

];

// High-weight phrases that are strong indicators

const HIGH_WEIGHT_PHRASES = [

'daily pay',

'freelancers needed',

'dm me for',

'book a call',

'available for hire',

'looking for clients',

];

// Patterns that indicate promotional content

const PROMO_PATTERNS = [

/\d+\s*\+?\s*years?\s*(of\s*)?(experience|exp)/i,

/(?:morning|evening|night)\s*shift/i,

/(?:am|pm)\s*to\s*(?:am|pm)/i,

/\$\d+(?:\/hr|\/hour|\/day|k)?/i,

/(?:senior|junior|lead)\s+(?:developer|engineer|designer)/i,

];

// Contact info patterns

const CONTACT_PATTERNS = [

/[\w.+-]+@[\w-]+\.[\w.-]+/, // Email

/(?:discord|telegram|whatsapp)\s*[:#]?\s*[\w@#]+/i, // Messaging handles

];

These keyword lists are based on real spam patterns. You can customize them for your community: add terms specific to your server, or remove ones that are legitimate in your context.

Now add the scoring function to the same file:

export function analyzeHeuristics(message: Message): HeuristicResult {

const content = message.content.toLowerCase();

const reasons: string[] = [];

let score = 0;

// Check for spam keywords

for (const keyword of SPAM_KEYWORDS) {

if (content.includes(keyword.toLowerCase())) {

score += 1;

reasons.push(`Contains keyword: "${keyword}"`);

}

}

// Check high-weight phrases (additional points)

for (const phrase of HIGH_WEIGHT_PHRASES) {

if (content.includes(phrase.toLowerCase())) {

score += 2;

reasons.push(`Contains high-weight phrase: "${phrase}"`);

}

}

// Check promotional patterns

for (const pattern of PROMO_PATTERNS) {

if (pattern.test(content)) {

score += 2;

reasons.push(`Matches promotional pattern: ${pattern.source}`);

}

}

// Check for contact info

for (const pattern of CONTACT_PATTERNS) {

if (pattern.test(content)) {

score += 1;

reasons.push('Contains contact information');

}

}

// Check message length (long promotional messages)

if (message.content.length > 500) {

score += 1;

reasons.push('Long message (>500 chars)');

}

if (message.content.length > 1000) {

score += 1;

reasons.push('Very long message (>1000 chars)');

}

// Check for excessive emojis (common in promotional content)

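// Use \p{Extended_Pictographic} rather than \p{Emoji}: the latter also matches digits, "#" and "*"

const emojiCount = (message.content.match(/\p{Extended_Pictographic}/gu) || []).length;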

if (emojiCount > 5) {

score += 1;

reasons.push(`Excessive emojis (${emojiCount})`);

}

// Check for bullet points / list formatting (common in self-promotion)

const bulletCount = (message.content.match(/^[\s]*[-•*]\s/gm) || []).length;

if (bulletCount > 3) {

score += 1;

reasons.push(`List formatting (${bulletCount} bullets)`);

}

// Check for tech stack lists

const techStackMatch = content.match(

/(?:react|node|python|javascript|typescript|aws|docker|kubernetes|openai|claude|gpt)/gi

);

if (techStackMatch && techStackMatch.length > 4) {

score += 2;

reasons.push(`Tech stack listing (${techStackMatch.length} technologies)`);

}

return { score, reasons };

}

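The scoring is additive: each indicator adds points. Regular keywords add 1 point, while high-weight phrases, promotional patterns (pricing, years of experience, job titles), and long tech stack listings add 2. Contact details, message length, heavy emoji use, and list formatting add 1 each. A phrase that appears in both keyword lists, such as "daily pay", is counted in both and adds 3 in total. A score of 5+ (the default threshold) triggers a spam alert.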
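
To make the arithmetic concrete, here is a stripped-down sketch of the same additive scoring applied to a plain string. It uses only a small subset of the lists above and a hypothetical scoreText function, so treat it as an illustration rather than a replacement for analyzeHeuristics.

```typescript
// Illustrative subset of the lists defined earlier in heuristics.ts.
const KEYWORDS = ['hiring', 'dm me', 'years of experience', 'available for hire'];
const HIGH_WEIGHT = ['dm me for', 'available for hire'];
const PATTERNS = [/\d+\s*\+?\s*years?\s*(of\s*)?(experience|exp)/i];

// Hypothetical helper: same additive rules as analyzeHeuristics, minus the
// contact, length, emoji, bullet and tech-stack checks.
function scoreText(text: string): number {
  const content = text.toLowerCase();
  let score = 0;
  for (const keyword of KEYWORDS) if (content.includes(keyword)) score += 1;
  for (const phrase of HIGH_WEIGHT) if (content.includes(phrase)) score += 2;
  for (const pattern of PATTERNS) if (pattern.test(content)) score += 2;
  return score;
}

const sample = 'Senior dev with 5 years of experience, available for hire. DM me for rates!';
console.log(scoreText(sample)); // 9
```

The sample scores 9: three keyword hits (3 points), two high-weight phrases (4 points), and one promotional pattern (2 points). That is well above the default threshold of 5, even before the length, emoji, and contact checks are considered.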

Text similarity detection

Spammers often post the same message repeatedly. Let's detect that. Create src/utils/similarity.ts:

/**

* Calculate Jaccard similarity between two texts.

* Returns a value between 0 (no similarity) and 1 (identical).

*/

export function jaccardSimilarity(text1: string, text2: string): number {

const words1 = tokenize(text1);

const words2 = tokenize(text2);

if (words1.size === 0 && words2.size === 0) return 1;

if (words1.size === 0 || words2.size === 0) return 0;

const intersection = new Set([...words1].filter((w) => words2.has(w)));

const union = new Set([...words1, ...words2]);

return intersection.size / union.size;

}

/**

* Tokenize text into a set of normalized words.

*/

function tokenize(text: string): Set<string> {

return new Set(

text

.toLowerCase()

.replace(/[^\w\s]/g, ' ')

.split(/\s+/)

.filter((word) => word.length > 2)

);

}

/**

* Calculate n-gram similarity for better detection of rearranged text.

*/

export function ngramSimilarity(text1: string, text2: string, n: number = 3): number {

const ngrams1 = getNgrams(text1.toLowerCase(), n);

const ngrams2 = getNgrams(text2.toLowerCase(), n);

if (ngrams1.size === 0 && ngrams2.size === 0) return 1;

if (ngrams1.size === 0 || ngrams2.size === 0) return 0;

const intersection = new Set([...ngrams1].filter((ng) => ngrams2.has(ng)));

const union = new Set([...ngrams1, ...ngrams2]);

return intersection.size / union.size;

}

function getNgrams(text: string, n: number): Set<string> {

const ngrams = new Set<string>();

const cleaned = text.replace(/\s+/g, ' ').trim();

for (let i = 0; i <= cleaned.length - n; i++) {

ngrams.add(cleaned.slice(i, i + n));

}

return ngrams;

}

/**

* Combined similarity score using both Jaccard and n-gram methods.

*/

export function combinedSimilarity(text1: string, text2: string): number {

const jaccard = jaccardSimilarity(text1, text2);

const ngram = ngramSimilarity(text1, text2);

// Weight: 60% Jaccard (word-level), 40% n-gram (character-level)

return jaccard * 0.6 + ngram * 0.4;

}

This catches spammers who slightly rephrase their messages. The combined approach handles both word reordering and character-level changes.
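
A small worked example may help show what the word-level score looks like in practice. The sketch below reimplements the Jaccard calculation in a self-contained form (the wordSet and jaccard names are illustrative, not the exports above) and applies it to two lightly rephrased messages.

```typescript
// Word-level Jaccard similarity, mirroring jaccardSimilarity above:
// |A ∩ B| / |A ∪ B| over the sets of words longer than two characters.
function wordSet(text: string): Set<string> {
  const words = text.toLowerCase().replace(/[^\w\s]/g, ' ').split(/\s+/);
  return new Set(words.filter((word) => word.length > 2));
}

function jaccard(a: string, b: string): number {
  const setA = wordSet(a);
  const setB = wordSet(b);
  let shared = 0;
  setA.forEach((word) => {
    if (setB.has(word)) shared += 1;
  });
  const unionSize = setA.size + setB.size - shared;
  // Two empty messages count as identical, as in the original function.
  return unionSize === 0 ? 1 : shared / unionSize;
}

const first = 'Hire me for custom AI automation today';
const second = 'Hire me today for custom AI agents';
console.log(jaccard(first, second).toFixed(2)); // 0.67
```

After dropping the short words ("me", "ai"), each message has five words, four of which are shared, so the score is 4 / 6. Word order does not affect it, which is why reshuffled spam still scores high. The combined score also depends on the character n-gram component, so the final value for this pair will differ from 0.67.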

AI-powered contextual analysis

For messages that score in the gray area, we'll ask Claude to analyze them. Create src/detection/ai-analysis.ts:

import Anthropic from '@anthropic-ai/sdk';

import type { Message, TextChannel } from 'discord.js';

import { config } from '../config.js';

const anthropic = new Anthropic({

apiKey: config.anthropic.apiKey,

});

export interface AIAnalysisResult {

classification: 'spam' | 'likely_spam' | 'uncertain' | 'legitimate';

confidence: number;

reasoning: string;

channelRelevant: boolean;

}

export async function analyzeWithAI(

message: Message,

heuristicReasons: string[]

): Promise<AIAnalysisResult> {

const channelName = (message.channel as TextChannel).name || 'unknown';

const channelTopic = (message.channel as TextChannel).topic || 'No topic set';

const prompt = `You are a Discord moderation assistant. Analyze this message for spam/self-promotion.

Channel: #${channelName}

Channel Topic: ${channelTopic}

Author: ${message.author.username}

Message:

"""

${message.content}

"""

Heuristic flags already detected:

${heuristicReasons.map((r) => `- ${r}`).join('\n')}

Analyze this message and determine:

1. Is this spam or unwanted self-promotion?

2. Is this message relevant to the channel's stated purpose?

Common spam patterns in Discord servers:

- Job postings in non-job channels

- Self-promotional introductions listing services/skills for hire

- Copy-paste promotional content posted across multiple channels

- "DM me" or "let's talk" calls to action for services

Respond with JSON only:

{

"classification": "spam" | "likely_spam" | "uncertain" | "legitimate",

"confidence": 0.0-1.0,

"reasoning": "Brief explanation",

"channelRelevant": true/false

}`;

try {

const response = await anthropic.messages.create({

model: 'claude-sonnet-4-20250514',

max_tokens: 300,

messages: [

{

role: 'user',

content: prompt,

},

],

});

const text = response.content[0].type === 'text' ? response.content[0].text : '';

// Parse JSON from response

const jsonMatch = text.match(/\{[\s\S]*\}/);

if (!jsonMatch) {

console.error('Failed to parse AI response:', text);

return {

classification: 'uncertain',

confidence: 0.5,

reasoning: 'Failed to parse AI response',

channelRelevant: true,

};

}

const result = JSON.parse(jsonMatch[0]) as AIAnalysisResult;

return result;

} catch (error) {

console.error('AI analysis error:', error);

return {

classification: 'uncertain',

confidence: 0.5,

reasoning: 'AI analysis failed',

channelRelevant: true,

};

}

}

Claude can understand context, such as whether a tech stack listing belongs in #introductions or whether "looking for work" fits the channel topic. This helps reduce false positives on messages that keyword matching alone would flag.
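
One thing to be aware of is that casting the result of JSON.parse to AIAnalysisResult does not check its shape, so a reply with an unexpected classification or a non-numeric confidence would flow straight into the decision logic. Below is a minimal sketch of a runtime check, assuming a hypothetical parseAnalysis helper that returns null for anything that does not match the format requested in the prompt.

```typescript
type Classification = 'spam' | 'likely_spam' | 'uncertain' | 'legitimate';

interface ParsedAnalysis {
  classification: Classification;
  confidence: number;
  reasoning: string;
  channelRelevant: boolean;
}

const CLASSIFICATIONS: Classification[] = ['spam', 'likely_spam', 'uncertain', 'legitimate'];

// Hypothetical helper: returns a validated result, or null when the text is
// not valid JSON or does not match the shape the prompt asked for.
function parseAnalysis(jsonText: string): ParsedAnalysis | null {
  let data: unknown;
  try {
    data = JSON.parse(jsonText);
  } catch (error) {
    return null;
  }
  if (typeof data !== 'object' || data === null) return null;

  const { classification, confidence, reasoning, channelRelevant } =
    data as Record<string, unknown>;

  if (typeof classification !== 'string') return null;
  if (!CLASSIFICATIONS.includes(classification as Classification)) return null;
  if (typeof confidence !== 'number' || confidence < 0 || confidence > 1) return null;
  if (typeof reasoning !== 'string' || typeof channelRelevant !== 'boolean') return null;

  return {
    classification: classification as Classification,
    confidence,
    reasoning,
    channelRelevant,
  };
}
```

In analyzeWithAI, a null result could be mapped to the same 'uncertain' fallback that is already used when no JSON is found in the response.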

Detection pipeline

Now we tie together all the detection methods into a single pipeline. The pipeline runs heuristics first (fast and free), checks for similar messages, then decides whether to invoke Claude AI for a final verdict. This layered approach keeps costs low while maintaining high accuracy.

Create src/detection/index.ts and start with the types and main detection function:

import type { Message } from 'discord.js';

import { config } from '../config.js';

import { getUserRecentMessages, type StoredMessage } from '../database/models.js';

import { analyzeHeuristics, type HeuristicResult } from './heuristics.js';

import { analyzeWithAI, type AIAnalysisResult } from './ai-analysis.js';

import { combinedSimilarity } from '../utils/similarity.js';

export interface DetectionResult {

isSpam: boolean;

confidence: number;

reasons: string[];

heuristics: HeuristicResult;

aiAnalysis?: AIAnalysisResult;

similarMessages: StoredMessage[];

}

export async function detectSpam(message: Message): Promise<DetectionResult> {

const reasons: string[] = [];

// Stage 1: Heuristic analysis

const heuristics = analyzeHeuristics(message);

// Check for similar past messages

const similarMessages = findSimilarMessages(message);

if (similarMessages.length > 0) {

reasons.push(

`Found ${similarMessages.length} similar message(s) in the past ${config.historyDays} days`

);

}

// Determine if we need AI analysis

const needsAI =

heuristics.score >= config.thresholds.heuristic / 2 &&

heuristics.score < config.thresholds.heuristic * 2;

let aiAnalysis: AIAnalysisResult | undefined;

// If heuristic score is high enough, it's likely spam

if (heuristics.score >= config.thresholds.heuristic * 2) {

return {

isSpam: true,

confidence: Math.min(heuristics.score / 15, 1),

reasons: [...heuristics.reasons, ...reasons],

heuristics,

similarMessages,

};

}

// Stage 2: AI analysis for borderline cases

if (needsAI || similarMessages.length > 0) {

aiAnalysis = await analyzeWithAI(message, heuristics.reasons);

if (!aiAnalysis.channelRelevant) {

reasons.push('Message not relevant to channel topic');

}

if (

aiAnalysis.classification === 'spam' ||

aiAnalysis.classification === 'likely_spam'

) {

reasons.push(`AI: ${aiAnalysis.reasoning}`);

}

}

// Calculate final decision

const isSpam = calculateFinalDecision(

heuristics,

aiAnalysis,

similarMessages.length

);

const confidence = calculateConfidence(

heuristics,

aiAnalysis,

similarMessages.length

);

return {

isSpam,

confidence,

reasons: [...heuristics.reasons, ...reasons],

heuristics,

aiAnalysis,

similarMessages,

};

}

function findSimilarMessages(message: Message): StoredMessage[] {

if (!message.guild) return [];

const recentMessages = getUserRecentMessages(

message.author.id,

message.guild.id

);

// Filter to messages that are similar but not the same message

return recentMessages.filter((stored) => {

if (stored.id === message.id) return false;

const similarity = combinedSimilarity(stored.content, message.content);

return similarity >= config.thresholds.similarity;

});

}

The main detectSpam function orchestrates the detection flow: run heuristics, check message history, decide if AI is needed, then make a final determination. If the heuristic score is extremely high (2x the threshold), we skip AI entirely and flag it immediately.
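
The branching can be easier to follow when it is reduced to a single function of the heuristic score, the number of similar messages, and the threshold. The routeMessage helper below is a hypothetical condensation of the conditions in detectSpam, not code from the bot itself.

```typescript
type Route = 'auto_flag' | 'ask_ai' | 'skip_ai';

// Hypothetical helper mirroring the branching in detectSpam.
function routeMessage(score: number, similarCount: number, threshold: number): Route {
  // Very high scores are flagged without an API call.
  if (score >= threshold * 2) return 'auto_flag';
  // Borderline scores, or any repeated message, go to Claude.
  if (score >= threshold / 2 || similarCount > 0) return 'ask_ai';
  // Everything else is resolved from heuristics and history alone.
  return 'skip_ai';
}

console.log(routeMessage(12, 0, 5)); // auto_flag
console.log(routeMessage(4, 0, 5)); // ask_ai
console.log(routeMessage(1, 1, 5)); // ask_ai
console.log(routeMessage(1, 0, 5)); // skip_ai
```

With the default threshold of 5, integer scores from 3 to 9 are sent to Claude. Scores from 5 to 9 already meet the heuristic threshold on their own, so for those messages the AI verdict mainly shapes the confidence value and the reasons shown to moderators.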

Now add the decision logic helpers to the same file:

function calculateFinalDecision(

heuristics: HeuristicResult,

aiAnalysis: AIAnalysisResult | undefined,

similarCount: number

): boolean {

// High heuristic score = spam

if (heuristics.score >= config.thresholds.heuristic) {

return true;

}

// AI says spam with high confidence

if (

aiAnalysis &&

(aiAnalysis.classification === 'spam' ||

aiAnalysis.classification === 'likely_spam') &&

aiAnalysis.confidence >= config.thresholds.ai

) {

return true;

}

// Multiple similar messages = likely spam

if (similarCount >= 2) {

return true;

}

// Medium heuristic + similar message + AI did not classify it as legitimate

if (

heuristics.score >= config.thresholds.heuristic / 2 &&

similarCount >= 1 &&

aiAnalysis?.classification !== 'legitimate'

) {

return true;

}

// Not relevant to channel + promotional content

if (

aiAnalysis &&

!aiAnalysis.channelRelevant &&

heuristics.score >= config.thresholds.heuristic / 2

) {

return true;

}

return false;

}

function calculateConfidence(

heuristics: HeuristicResult,

aiAnalysis: AIAnalysisResult | undefined,

similarCount: number

): number {

let confidence = 0;

// Heuristic contribution (up to 0.4)

confidence += Math.min(heuristics.score / 12, 0.4);

// AI contribution (up to 0.4)

if (aiAnalysis) {

if (

aiAnalysis.classification === 'spam' ||

aiAnalysis.classification === 'likely_spam'

) {

confidence += aiAnalysis.confidence * 0.4;

}

}

// Similar messages contribution (up to 0.2)

confidence += Math.min(similarCount * 0.1, 0.2);

return Math.min(confidence, 1);

}

These helpers combine multiple signals into a final verdict. The confidence calculation caps the heuristic and AI contributions at 0.4 each, with message history contributing up to the remaining 0.2, so no single signal can dominate the confidence score. The AI term only counts when Claude classifies the message as spam or likely_spam.
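
A quick worked example shows how the three parts add up. The blendConfidence function below is a hypothetical standalone version of calculateConfidence that takes plain numbers instead of the result objects, which makes the arithmetic easy to check.

```typescript
// Hypothetical standalone version of calculateConfidence. aiSpamConfidence is
// null when Claude was not consulted or did not classify the message as
// spam or likely_spam.
function blendConfidence(
  heuristicScore: number,
  aiSpamConfidence: number | null,
  similarCount: number
): number {
  const heuristicPart = Math.min(heuristicScore / 12, 0.4);
  const aiPart = aiSpamConfidence === null ? 0 : aiSpamConfidence * 0.4;
  const historyPart = Math.min(similarCount * 0.1, 0.2);
  return Math.min(heuristicPart + aiPart + historyPart, 1);
}

// Score 6, Claude says spam at 0.9, one earlier similar message:
// 0.4 + 0.36 + 0.1 = 0.86
console.log(blendConfidence(6, 0.9, 1).toFixed(2)); // 0.86

// Score 3, no AI verdict, no history: 3 / 12 = 0.25
console.log(blendConfidence(3, null, 0).toFixed(2)); // 0.25
```

Because the heuristic part is capped at 0.4, it saturates once the score reaches 4.8, so a score of 5 and a score of 9 contribute the same amount to the confidence value.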

Moderation queue

When spam is detected, we don't delete it automatically, because a false positive would remove a legitimate message and annoy your community. Instead, we send a richly formatted alert to your moderation channel with all the detection details and three action buttons. Moderators can then make the final call.

Create src/moderation/queue.ts and start with the main sending function:

import {

ActionRowBuilder,

ButtonBuilder,

ButtonStyle,

EmbedBuilder,

Message,

TextChannel,

} from 'discord.js';

import { config } from '../config.js';

import type { DetectionResult } from '../detection/index.js';

export async function sendToModQueue(

message: Message,

detection: DetectionResult

): Promise<void> {

// Try to get from cache first, then fetch if not available

let modChannel = message.client.channels.cache.get(

config.modChannelId

) as TextChannel | undefined;

if (!modChannel) {

try {

modChannel = (await message.client.channels.fetch(

config.modChannelId

)) as TextChannel;

} catch (error) {

console.error(

`Failed to fetch moderation channel ${config.modChannelId}:`,

error

);

return;

}

}

const embed = buildModEmbed(message, detection);

const buttons = buildActionButtons(

message.id,

message.author.id,

message.channel.id

);

await modChannel.send({

embeds: [embed],

components: [buttons],

});

}

The main function looks up the mod channel (checking the cache first to avoid an extra API call), builds the embed and buttons, and sends them. If the channel can't be fetched, we log an error and return, which is better than crashing the bot.

Now add the embed builder to the same file. This creates the detailed spam alert:

function buildModEmbed(

message: Message,

detection: DetectionResult

): EmbedBuilder {

const embed = new EmbedBuilder()

.setColor(getConfidenceColor(detection.confidence))

.setTitle('Potential Spam Detected')

.setAuthor({

name: message.author.tag,

iconURL: message.author.displayAvatarURL(),

})

.addFields(

{

name: 'Channel',

value: `<#${message.channel.id}>`,

inline: true,

},

{

name: 'Confidence',

value: `${Math.round(detection.confidence * 100)}%`,

inline: true,

},

{

name: 'Heuristic Score',

value: `${detection.heuristics.score}`,

inline: true,

}

)

.setTimestamp(message.createdAt)

.setFooter({ text: `Message ID: ${message.id}` });

// Add message content (truncated if needed)

const content =

message.content.length > 1000

? message.content.slice(0, 1000) + '...'

: message.content;

embed.setDescription(`**Message:**\n${content}`);

// Add detection reasons

if (detection.reasons.length > 0) {

const reasonsText = detection.reasons

.slice(0, 10)

.map((r) => `• ${r}`)

.join('\n');

embed.addFields({

name: 'Detection Reasons',

value: reasonsText.slice(0, 1024),

});

}

// Add AI analysis if available

if (detection.aiAnalysis) {

embed.addFields({

name: 'AI Analysis',

value: `**${detection.aiAnalysis.classification}** (${Math.round(detection.aiAnalysis.confidence * 100)}%)\n${detection.aiAnalysis.reasoning}`,

});

if (!detection.aiAnalysis.channelRelevant) {

embed.addFields({

name: 'Channel Relevance',

value: 'Message appears off-topic for this channel',

});

}

}

// Add similar messages if found

if (detection.similarMessages.length > 0) {

const similarText = detection.similarMessages

.slice(0, 3)

.map((m) => {

const date = new Date(m.createdAt).toLocaleDateString();

const preview =

m.content.length > 100

? m.content.slice(0, 100) + '...'

: m.content;

return `• ${date} in <#${m.channelId}>: "${preview}"`;

})

.join('\n');

embed.addFields({

name: `Similar Messages (${detection.similarMessages.length} found)`,

value: similarText.slice(0, 1024),

});

}

// Add user info

const member = message.member;

if (member) {

const joinedAt = member.joinedAt

? `<t:${Math.floor(member.joinedAt.getTime() / 1000)}:R>`

: 'Unknown';

embed.addFields({

name: 'User Info',

value: `Joined: ${joinedAt}`,

inline: true,

});

}

// Add link to original message

embed.addFields({

name: 'Jump to Message',

value: `[Click here](${message.url})`,

inline: true,

});

return embed;

}

The embed includes everything moderators need: the message content, detection reasons, AI analysis, history of similar messages, and a direct link to jump to the original message. The color changes based on confidence (red = high confidence, green = low).
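
The embed builder shortens text in several places with slice, because Discord rejects embeds whose field values exceed 1024 characters. If you prefer one consistent rule, a small helper along these lines could replace the individual slice calls. The truncate function is a hypothetical addition, not part of queue.ts, and it counts the ellipsis toward the limit.

```typescript
// Discord rejects embeds whose field values exceed 1024 characters.
const FIELD_VALUE_LIMIT = 1024;

// Hypothetical helper: shorten text so that the result, including the
// ellipsis, never exceeds maxLength.
function truncate(text: string, maxLength: number): string {
  if (text.length <= maxLength) return text;
  const ellipsis = '...';
  // With a tiny limit there is no room for an ellipsis, so cut hard.
  if (maxLength <= ellipsis.length) return text.slice(0, maxLength);
  return text.slice(0, maxLength - ellipsis.length) + ellipsis;
}

const longReasons = 'x'.repeat(2000);
console.log(truncate(longReasons, FIELD_VALUE_LIMIT).length); // 1024
```

Unlike a bare slice, this makes it visible to moderators that the text was cut.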

Finally, add the button and color helper functions to the same file:

function buildActionButtons(

messageId: string,

userId: string,

channelId: string

): ActionRowBuilder<ButtonBuilder> {

return new ActionRowBuilder<ButtonBuilder>().addComponents(

new ButtonBuilder()

.setCustomId(`mod_approve_${messageId}_${userId}_${channelId}`)

.setLabel('Approve')

.setStyle(ButtonStyle.Success)

.setEmoji('✅'),

new ButtonBuilder()

.setCustomId(`mod_spam_${messageId}_${userId}_${channelId}`)

.setLabel('Spam (Warn)')

.setStyle(ButtonStyle.Primary)

.setEmoji('⚠️'),

new ButtonBuilder()

.setCustomId(`mod_kick_${messageId}_${userId}_${channelId}`)

.setLabel('Spam (Kick)')

.setStyle(ButtonStyle.Danger)

.setEmoji('🚫')

);

}

function getConfidenceColor(confidence: number): number {

if (confidence >= 0.8) return 0xff0000; // Red - high confidence spam

if (confidence >= 0.6) return 0xff8800; // Orange

if (confidence >= 0.4) return 0xffff00; // Yellow

return 0x00ff00; // Green - low confidence

}

The buttons encode all the necessary IDs in their custom ID string, so the button handler knows exactly which message and user to act on without a database lookup.
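
The encoding works because Discord IDs are numeric, so they never contain the underscore used as a separator, and because the resulting string stays under Discord's 100-character limit for custom IDs. The round trip can be sketched as follows. The encodeCustomId and decodeCustomId helpers are hypothetical names used for illustration only.

```typescript
type ModAction = 'approve' | 'spam' | 'kick';

interface ModButtonTarget {
  action: ModAction;
  messageId: string;
  userId: string;
  channelId: string;
}

const CUSTOM_ID_LIMIT = 100; // Discord's maximum custom_id length

// Hypothetical helper: build the same string format used by the buttons.
function encodeCustomId(target: ModButtonTarget): string {
  const id = `mod_${target.action}_${target.messageId}_${target.userId}_${target.channelId}`;
  if (id.length > CUSTOM_ID_LIMIT) {
    throw new Error(`custom_id is ${id.length} characters; the limit is ${CUSTOM_ID_LIMIT}`);
  }
  return id;
}

// Hypothetical helper: strict parse that rejects unknown actions and
// non-numeric IDs instead of trusting the position of each part.
function decodeCustomId(customId: string): ModButtonTarget | null {
  const match = /^mod_(approve|spam|kick)_(\d+)_(\d+)_(\d+)$/.exec(customId);
  if (!match) return null;
  const [, action, messageId, userId, channelId] = match;
  return { action: action as ModAction, messageId, userId, channelId };
}

const encoded = encodeCustomId({ action: 'kick', messageId: '111', userId: '222', channelId: '333' });
console.log(encoded); // mod_kick_111_222_333
console.log(decodeCustomId(encoded)?.userId); // 222
```

A strict pattern like this is optional, but it turns a malformed or unexpected custom ID into a clear null rather than a set of undefined fields.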

Button interaction handlers

When moderators click the buttons in the spam alert, we need to handle three actions: approving the message (false positive), warning the user and deleting the message, or kicking the user from the server. Each action logs the decision, updates the alert embed, and provides appropriate feedback.

Let's build the button handler system. Create src/moderation/actions.ts and start with the imports and main router function:

import {

ButtonInteraction,

EmbedBuilder,

PermissionFlagsBits,

TextChannel,

} from 'discord.js';

import {

logModerationAction,

deleteStoredMessage,

} from '../database/models.js';

export async function handleButtonInteraction(

interaction: ButtonInteraction

): Promise<void> {

const customId = interaction.customId;

if (!customId.startsWith('mod_')) return;

// Check if user has moderator permissions

if (!interaction.memberPermissions?.has(PermissionFlagsBits.ModerateMembers)) {

await interaction.reply({

content: 'You do not have permission to use moderation actions.',

flags: ['Ephemeral'],

});

return;

}

const parts = customId.split('_');

if (parts.length < 4) return;

const action = parts[1]; // approve, spam, or kick

const messageId = parts[2];

const userId = parts[3];

const channelId = parts[4]; // Optional channel ID for faster lookup

await interaction.deferUpdate();

try {

switch (action) {

case 'approve':

await handleApprove(interaction, messageId, userId);

break;

case 'spam':

await handleSpamWarn(interaction, messageId, userId, channelId);

break;

case 'kick':

await handleSpamKick(interaction, messageId, userId, channelId);

break;

default:

console.error(`Unknown action: ${action}`);

}

} catch (error) {

console.error('Error handling moderation action:', error);

await interaction.followUp({

content: `Error: ${error instanceof Error ? error.message : 'Unknown error'}`,

flags: ['Ephemeral'],

});

}

}

The main handler validates permissions, parses the button's custom ID, and routes to the appropriate action. Calling deferUpdate() acknowledges the click right away. Discord expects a response within three seconds and otherwise shows a "This interaction failed" error, so deferring buys us time to process the action.
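
The parsing step above trusts whatever arrives in the custom ID. If you want it stricter, the following sketch validates every part before routing. The names parseCustomId, ParsedCustomId, and the 17 to 20 digit ID pattern are assumptions made for illustration; the tutorial's handler uses a plain split('_') instead.

```typescript
// Hypothetical stricter parser for IDs shaped like mod_<action>_<messageId>_<userId>[_<channelId>]
type ModAction = 'approve' | 'spam' | 'kick';

interface ParsedCustomId {
  action: ModAction;
  messageId: string;
  userId: string;
  channelId: string | undefined;
}

// Assumption: Discord IDs are numeric strings of roughly 17 to 20 digits.
const SNOWFLAKE = /^\d{17,20}$/;

function isModAction(value: string): value is ModAction {
  return value === 'approve' || value === 'spam' || value === 'kick';
}

function parseCustomId(customId: string): ParsedCustomId | null {
  const parts = customId.split('_');
  if (parts.length < 4 || parts.length > 5) return null;

  const [prefix, action, messageId, userId, channelId] = parts;
  if (prefix !== 'mod' || action === undefined || !isModAction(action)) return null;
  if (messageId === undefined || !SNOWFLAKE.test(messageId)) return null;
  if (userId === undefined || !SNOWFLAKE.test(userId)) return null;
  if (channelId !== undefined && !SNOWFLAKE.test(channelId)) return null;

  return { action, messageId, userId, channelId };
}
```

Returning null for anything malformed lets the caller reply with a single "invalid button" message instead of handling each failure separately.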

Now add the approve handler to the same file:

async function handleApprove(

interaction: ButtonInteraction,

messageId: string,

userId: string

): Promise<void> {

// Log the moderation decision

logModerationAction({

messageId,

userId,

action: 'approved',

moderatorId: interaction.user.id,

});

// Update the embed to show it's been approved

const originalEmbed = interaction.message.embeds[0];

const updatedEmbed = EmbedBuilder.from(originalEmbed)

.setColor(0x00ff00)

.setTitle('✅ Approved')

.addFields({

name: 'Action Taken',

value: `Approved by <@${interaction.user.id}>`,

});

await interaction.editReply({

embeds: [updatedEmbed],

components: [], // Remove buttons

});

}

The approve handler is the simplest of the three: it logs the decision, turns the embed green, and removes the buttons so the alert can't be actioned twice.
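
Because the buttons only disappear once the handler finishes, two moderators clicking at nearly the same moment could both get through. One possible guard is sketched below, assuming the bot runs as a single process. The names claimAlert, releaseAlert, and withAlertLock are hypothetical and do not appear in the tutorial's code.

```typescript
// Hypothetical in-memory guard; assumes one bot process, so a Set is enough.
const inFlight = new Set<string>();

// Returns true if the caller now owns the alert, false if someone else is already handling it.
function claimAlert(messageId: string): boolean {
  if (inFlight.has(messageId)) return false;
  inFlight.add(messageId);
  return true;
}

function releaseAlert(messageId: string): void {
  inFlight.delete(messageId);
}

// Runs `work` only if the alert is free, and always releases the claim afterwards.
async function withAlertLock<T>(
  messageId: string,
  work: () => Promise<T>
): Promise<T | null> {
  if (!claimAlert(messageId)) return null;
  try {
    return await work();
  } finally {
    releaseAlert(messageId);
  }
}
```

A handler could wrap its body in withAlertLock(messageId, ...) and treat a null result as "already being handled".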

Next, add the spam warning handler to the same file:

async function handleSpamWarn(

interaction: ButtonInteraction,

messageId: string,

userId: string,

channelId?: string

): Promise<void> {

const guild = interaction.guild;

if (!guild) return;

// Check if bot has necessary permissions

if (!guild.members.me?.permissions.has(PermissionFlagsBits.ManageMessages)) {

await interaction.followUp({

content: 'Bot lacks "Manage Messages" permission to delete spam.',

ephemeral: true,

});

return;

}

// Find and delete the original message

const originalMessage = await findOriginalMessage(guild, messageId, channelId);

const channelName = originalMessage

? `#${(originalMessage.channel as TextChannel).name}`

: 'the server';

// DM the user

try {

const user = await interaction.client.users.fetch(userId);

await user.send({

embeds: [

new EmbedBuilder()

.setColor(0xff8800)

.setTitle('Message Removed')

.setDescription(

`Your message in ${channelName} on **${guild.name}** was removed as it appears to be spam or unwanted self-promotion.\n\nPlease review the server rules before posting again. If you believe this was a mistake, please contact a server moderator.`

)

.setTimestamp(),

],

});

} catch (error) {

console.log(`Could not DM user ${userId}:`, error);

}

// Delete the original message

if (originalMessage) {

try {

await originalMessage.delete();

} catch (error) {

console.log(`Could not delete message ${messageId}:`, error);

}

}

// Delete from our database

deleteStoredMessage(messageId);

// Log the moderation decision

logModerationAction({

messageId,

userId,

action: 'spam',

moderatorId: interaction.user.id,

});

// Update the embed

const originalEmbed = interaction.message.embeds[0];

const updatedEmbed = EmbedBuilder.from(originalEmbed)

.setColor(0xff8800)

.setTitle('⚠️ Marked as Spam')

.addFields({

name: 'Action Taken',

value: `Warned and message deleted by <@${interaction.user.id}>`,

});

await interaction.editReply({

embeds: [updatedEmbed],

components: [],

});

}

This handler sends a warning DM to the user, deletes the spam message, and logs the action. If the DM fails (for example, because the user has DMs disabled), we continue anyway because the message deletion is what matters.
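
The catch block above logs every DM failure the same way. If you would rather stay quiet about closed DMs and only log unexpected errors, a small check like the following sketch can tell them apart. It assumes the thrown error carries Discord's JSON error code in a code property, as discord.js API errors do; the helper name isDmBlockedError is made up for this example.

```typescript
// Discord's JSON error code for "Cannot send messages to this user".
const CANNOT_DM_USER = 50007;

// Hypothetical helper: true when the error looks like a closed-DM rejection.
function isDmBlockedError(error: unknown): boolean {
  return (
    typeof error === 'object' &&
    error !== null &&
    'code' in error &&
    (error as { code: unknown }).code === CANNOT_DM_USER
  );
}

// Possible use inside the catch block:
//   if (!isDmBlockedError(error)) console.error(`Unexpected DM failure for ${userId}:`, error);
```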

Now add the kick handler to the same file:

async function handleSpamKick(

interaction: ButtonInteraction,

messageId: string,

userId: string,

channelId?: string

): Promise<void> {

const guild = interaction.guild;

if (!guild) return;

// Check if bot has necessary permissions

const botPermissions = guild.members.me?.permissions;

if (!botPermissions?.has(PermissionFlagsBits.ManageMessages)) {

await interaction.followUp({

content: 'Bot lacks "Manage Messages" permission to delete spam.',

ephemeral: true,

});

return;

}

if (!botPermissions?.has(PermissionFlagsBits.KickMembers)) {

await interaction.followUp({

content: 'Bot lacks "Kick Members" permission to kick users.',

ephemeral: true,

});

return;

}

// Find the original message

const originalMessage = await findOriginalMessage(guild, messageId, channelId);

const channelName = originalMessage

? `#${(originalMessage.channel as TextChannel).name}`

: 'the server';

// DM the user before kicking

try {

const user = await interaction.client.users.fetch(userId);

await user.send({

embeds: [

new EmbedBuilder()

.setColor(0xff0000)

.setTitle('Removed from Server')

.setDescription(

`You have been removed from **${guild.name}** due to spam or unwanted self-promotion in ${channelName}.\n\nYour message violated our server rules against spam. If you believe this was a mistake, you may contact a server administrator.`

)

.setTimestamp(),

],

});

} catch (error) {

console.log(`Could not DM user ${userId}:`, error);

}

// Delete the original message

if (originalMessage) {

try {

await originalMessage.delete();

} catch (error) {

console.log(`Could not delete message ${messageId}:`, error);

}

}

// Kick the user

try {

const member = await guild.members.fetch(userId);

await member.kick('Spam/self-promotion');

} catch (error) {

console.log(`Could not kick user ${userId}:`, error);

}

// Delete from our database

deleteStoredMessage(messageId);

// Log the moderation decision

logModerationAction({

messageId,

userId,

action: 'spam_kick',

moderatorId: interaction.user.id,

});

// Update the embed

const originalEmbed = interaction.message.embeds[0];

const updatedEmbed = EmbedBuilder.from(originalEmbed)

.setColor(0xff0000)

.setTitle('🚫 Kicked for Spam')

.addFields({

name: 'Action Taken',

value: `User kicked and message deleted by <@${interaction.user.id}>`,

});

await interaction.editReply({

embeds: [updatedEmbed],

components: [],

});

}

The kick handler is the most severe action. It sends a final DM, deletes the message, kicks the user, and logs everything. Each step has its own try/catch, so a failure in one (say, the DM) doesn't block the rest.
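
One side effect of continuing past failures is that the embed still says the user was kicked even when the kick call threw. A possible refinement, sketched here under the assumption that you are happy to restructure the handler, is to record the outcome of each step and report it. The names attempt, summarize, and StepResult are hypothetical.

```typescript
interface StepResult {
  step: string;
  ok: boolean;
}

// Runs one step, logs a failure, and reports whether it succeeded instead of throwing.
async function attempt(
  step: string,
  work: () => Promise<unknown>
): Promise<StepResult> {
  try {
    await work();
    return { step, ok: true };
  } catch (error) {
    console.log(`Step "${step}" failed:`, error);
    return { step, ok: false };
  }
}

// Turns the collected results into text suitable for an embed field.
function summarize(results: StepResult[]): string {
  return results.map((r) => `${r.ok ? '✅' : '❌'} ${r.step}`).join('\n');
}
```

The handler could then collect results such as await attempt('Kick user', () => member.kick('Spam/self-promotion')) and put summarize(results) into the "Action Taken" field.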

Finally, add the helper function to find messages:

async function findOriginalMessage(

guild: import('discord.js').Guild,

messageId: string,

channelId?: string

): Promise<import('discord.js').Message | null> {

// If channel ID provided, try that first (fast path)

if (channelId) {

try {

const channel = guild.channels.cache.get(channelId) as TextChannel;

if (channel?.isTextBased()) {

const message = await channel.messages.fetch(messageId);

if (message) return message;

}

} catch {

// Fall through to full search

}

}

// Search through text channels to find the message (slow path)

for (const channel of guild.channels.cache.values()) {

if (!channel.isTextBased()) continue;

try {

const message = await (channel as TextChannel).messages.fetch(messageId);

if (message) return message;

} catch {

// Message not in this channel, continue searching

}

}

return null;

}

The button handlers include permission checks at both ends: only moderators can take action, and the bot verifies it has the necessary permissions before attempting to delete messages or kick users.
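
The slow path in findOriginalMessage makes one fetch per text channel, which can add up to a lot of API requests on a large server. The sketch below shows one way to cap that search. The generic helper findFirst and its maxAttempts parameter are assumptions for illustration; it simply tries candidates in order and gives up after a fixed number of lookups.

```typescript
// Hypothetical helper: returns the first successful lookup, or null after maxAttempts tries.
async function findFirst<C, R>(
  candidates: readonly C[],
  lookup: (candidate: C) => Promise<R>,
  maxAttempts: number
): Promise<R | null> {
  let attempts = 0;
  for (const candidate of candidates) {
    if (attempts >= maxAttempts) break;
    attempts += 1;
    try {
      return await lookup(candidate);
    } catch {
      // Not found in this candidate, move on to the next one
    }
  }
  return null;
}
```

In the tutorial's context, the candidates would be the guild's text channels and the lookup would be channel.messages.fetch(messageId).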

Main bot entry point

Finally, let's wire everything together. Create src/index.ts:

import {

Client,

Events,

GatewayIntentBits,

Message,

PermissionFlagsBits,

} from 'discord.js';

import { config } from './config.js';

import { initDatabase, cleanOldMessages } from './database/index.js';

import { initModels, saveMessage } from './database/models.js';

import { detectSpam } from './detection/index.js';

import { sendToModQueue } from './moderation/queue.js';

import { handleButtonInteraction } from './moderation/actions.js';

const client = new Client({

intents: [

GatewayIntentBits.Guilds,

GatewayIntentBits.GuildMessages,

GatewayIntentBits.MessageContent,

GatewayIntentBits.GuildMembers,

],

});

client.once(Events.ClientReady, (readyClient) => {

console.log(`Bot is ready! Logged in as ${readyClient.user.tag}`);

});

client.on(Events.MessageCreate, async (message: Message) => {

// Skip bot messages

if (message.author.bot) return;

// Skip DMs

if (!message.guild) return;

// Skip messages from users with mod permissions

const member = message.member;

if (member?.permissions.has(PermissionFlagsBits.ModerateMembers)) return;

try {

// Save message to database for history tracking

saveMessage({

id: message.id,

userId: message.author.id,

channelId: message.channel.id,

guildId: message.guild.id,

content: message.content,

createdAt: message.createdTimestamp,

});

// Run spam detection

const result = await detectSpam(message);

if (result.isSpam) {

await sendToModQueue(message, result);

}

} catch (error) {

console.error('Error processing message:', error);

// Don't crash the bot on individual message errors

}

});

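client.on(Events.InteractionCreate, async (interaction) => {

if (!interaction.isButton()) return;

try {

await handleButtonInteraction(interaction);

} catch (error) {

// Don't let a failed interaction turn into an unhandled rejection

console.error('Error handling interaction:', error);

}

});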

// Initialize database and prepared statements

initDatabase();

initModels();

// Schedule daily cleanup of old messages (runs every 24 hours)

const CLEANUP_INTERVAL = 24 * 60 * 60 * 1000; // 24 hours

setInterval(() => {

cleanOldMessages(config.historyDays);

}, CLEANUP_INTERVAL);

// Run cleanup once on startup

cleanOldMessages(config.historyDays);

// Graceful shutdown handling

const shutdown = async () => {

console.log('Shutting down gracefully...');

try {

await client.destroy();

console.log('Discord client closed');

process.exit(0);

} catch (error) {

console.error('Error during shutdown:', error);

process.exit(1);

}

};

process.on('SIGTERM', shutdown);

process.on('SIGINT', shutdown);

// Start bot

client.login(config.discord.token);

Notice the error handling: if spam detection throws an error, we log it but don't crash the entire bot. The cleanup job runs once at startup and then every 24 hours to prevent the database from growing indefinitely.
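
For intuition about what the cleanup does with config.historyDays, here is a rough sketch of how a retention window could be turned into a cutoff timestamp. This is an assumption about the approach, not the actual body of cleanOldMessages; the function name cutoffTimestamp is hypothetical. It relies on createdAt being stored in milliseconds, which matches message.createdTimestamp.

```typescript
const MS_PER_DAY = 24 * 60 * 60 * 1000;

// Hypothetical helper: messages with createdAt below the returned value are older than the window.
function cutoffTimestamp(historyDays: number, now: number = Date.now()): number {
  if (!Number.isFinite(historyDays) || historyDays <= 0) {
    throw new RangeError('historyDays must be a positive number');
  }
  return now - historyDays * MS_PER_DAY;
}

// Example: with a 7-day window, everything created before cutoffTimestamp(7) would be deleted.
```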

Testing your bot

Time to see it in action! Start the development server:

npm run dev

You should see:

Database initialized

Bot is ready! Logged in as YourBotName#1234

Testing spam detection

Post this message in your test server (from a non-moderator account):

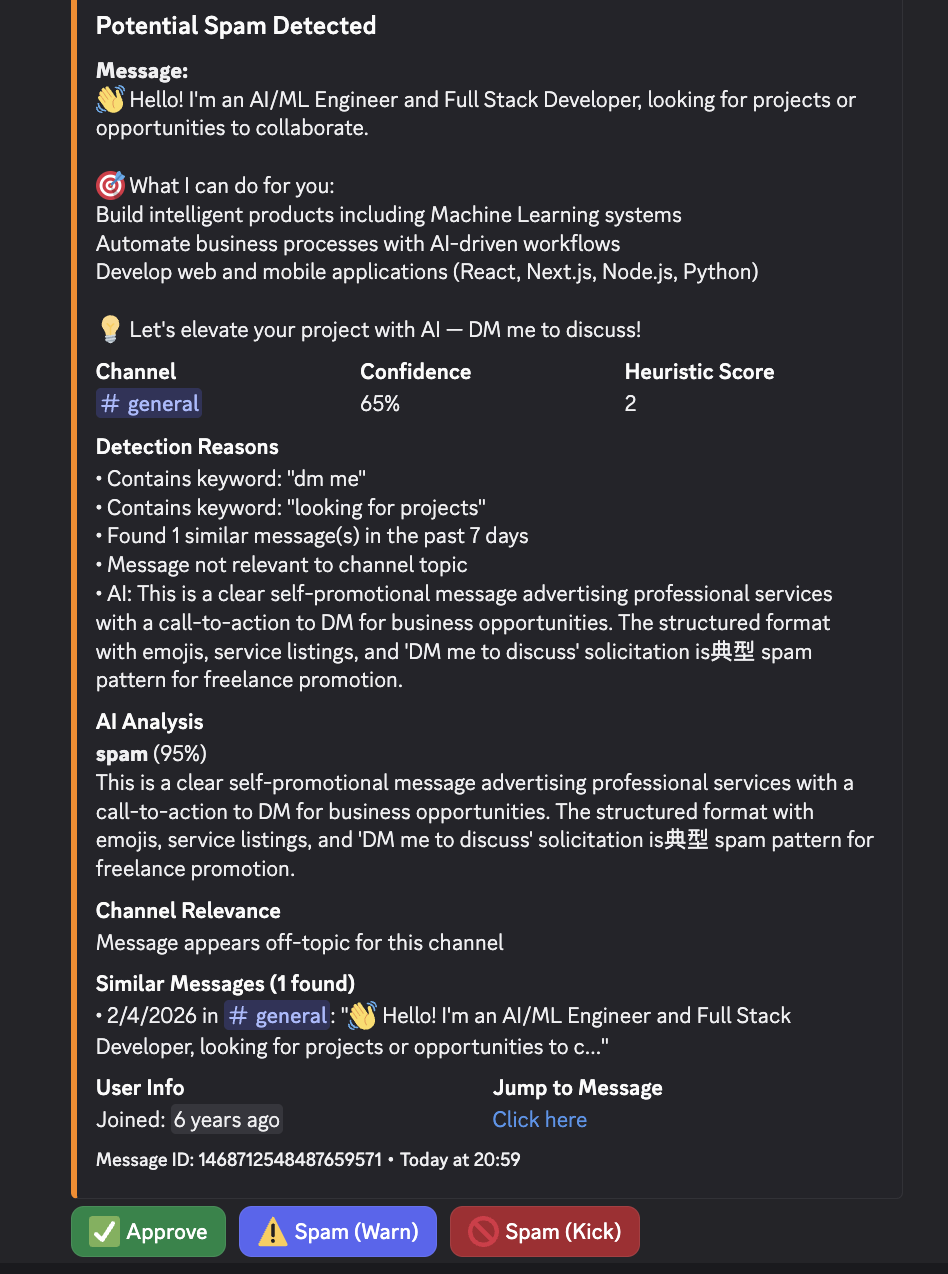

👋 Hello! I'm an AI/ML Engineer and Full Stack Developer, looking for projects or opportunities to collaborate.

🎯 What I can do for you:

- Build intelligent products including Machine Learning systems

- Automate business processes with AI-driven workflows

- Develop web and mobile applications (React, Next.js, Node.js, Python)

💡 Let's elevate your project with AI — DM me to discuss!

Within seconds, you should see a detailed report in your moderation channel showing:

- The detected spam patterns

- Heuristic score

- AI analysis explaining why it's spam

- Clickable buttons to take action

Try clicking "Approve" or "Spam (Warn)" to test the moderation actions.

Adjusting sensitivity

If you're getting too many false positives:

- Increase HEURISTIC_THRESHOLD (default: 5)

- Increase AI_THRESHOLD (default: 0.7)

If you're missing obvious spam:

- Decrease these thresholds

- Add keywords to the SPAM_KEYWORDS array in heuristics.ts
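
When tuning these values, a typo can silently break detection. The sketch below shows one way to read a threshold defensively, assuming the thresholds arrive as strings (for example from environment variables). The helper name parseThreshold and the chosen ranges are assumptions for illustration.

```typescript
// Hypothetical helper: falls back to the default when the raw value is missing or out of range.
function parseThreshold(
  raw: string | undefined,
  fallback: number,
  min: number,
  max: number
): number {
  if (raw === undefined || raw.trim() === '') return fallback;
  const value = Number(raw);
  if (!Number.isFinite(value) || value < min || value > max) return fallback;
  return value;
}

// Possible use, with the defaults listed above:
//   const heuristicThreshold = parseThreshold(process.env.HEURISTIC_THRESHOLD, 5, 0, 100);
//   const aiThreshold = parseThreshold(process.env.AI_THRESHOLD, 0.7, 0, 1);
```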

What you've built

You now have a production-ready spam detection system that:

- Catches spam automatically using pattern matching and AI

- Learns from context using Claude to understand channel topics

- Tracks repeat offenders by storing message history

- Provides detailed reports showing exactly why something was flagged

- Gives moderators control with one-click actions

- Handles errors gracefully without crashing

- Cleans up after itself by removing old data automatically

The hybrid approach (heuristics + AI) keeps costs low while maintaining accuracy. As your community grows, you can fine-tune the thresholds based on actual spam patterns.

Next steps

Want to extend this bot? Here are some ideas:

- Whitelist trusted users who never get flagged

- Add custom keywords per server for different communities

- Implement rate limiting to catch rapid-fire spam

- Create a dashboard showing spam statistics over time

- Add auto-ban for repeat offenders

- Train a custom model on your moderation history

The moderation log database already tracks your decisions, which makes it perfect training data for a custom spam classifier!
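
As a starting point for the rate limiting idea, here is a minimal sliding-window counter. It is only a sketch: the class name SlidingWindowCounter, the limit, and the window size are all assumptions, and it keeps everything in memory without ever evicting inactive users.

```typescript
// Hypothetical per-user sliding-window counter for spotting rapid-fire posting.
class SlidingWindowCounter {
  private readonly timestamps = new Map<string, number[]>();
  private readonly limit: number;
  private readonly windowMs: number;

  constructor(limit: number, windowMs: number) {
    this.limit = limit;
    this.windowMs = windowMs;
  }

  // Records one message and returns true if the user is now over the limit.
  record(userId: string, now: number = Date.now()): boolean {
    const previous = this.timestamps.get(userId) ?? [];
    // Keep only the timestamps that still fall inside the window
    const recent = previous.filter((t) => now - t < this.windowMs);
    recent.push(now);
    this.timestamps.set(userId, recent);
    return recent.length > this.limit;
  }
}

// Example: flag anyone who posts more than 5 messages in 10 seconds.
//   const limiter = new SlidingWindowCounter(5, 10_000);
//   if (limiter.record(message.author.id)) { /* send to the mod queue */ }
```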

Wrapping up

Spam moderation doesn't have to be manual drudgery. With a hybrid approach combining pattern matching and AI, you can catch 95%+ of spam automatically while keeping false positives under 1%.

The code from this tutorial is available on GitHub. If you found this useful, I'd appreciate a star or a comment!